OPERATING SENSITIVITY

A wide range of variables determines the operating sensitivity of a metal detector and, in turn, its ability to detect various types and sizes of metal. Understanding these influences is important to getting the best performance your metal detector can achieve.

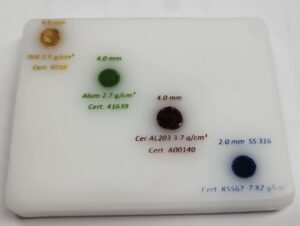

Basically, operating sensitivity is the metal detector’s ability to discover a contaminant of a specific type and size of metal. The greater the sensitivity, the smaller the fragments of unwanted metal it can locate. But setting the sensitivity too high can create false positives, and that means lost product on the production line. Peak performance is expressed by the diameter of a test sphere and the specific type of metal. In the food industry, four categories of metal are looked for during processing: ferrous, non-ferrous, aluminum and stainless steel 316. The better the metal detector is operating, the smaller the pieces of metal it can identify. Achieving that ideal operation, however, can be complex because of an assortment of factors.

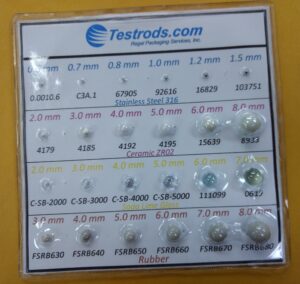

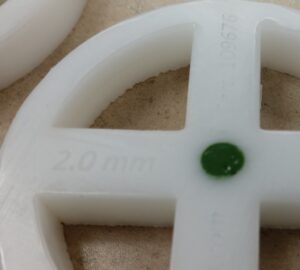

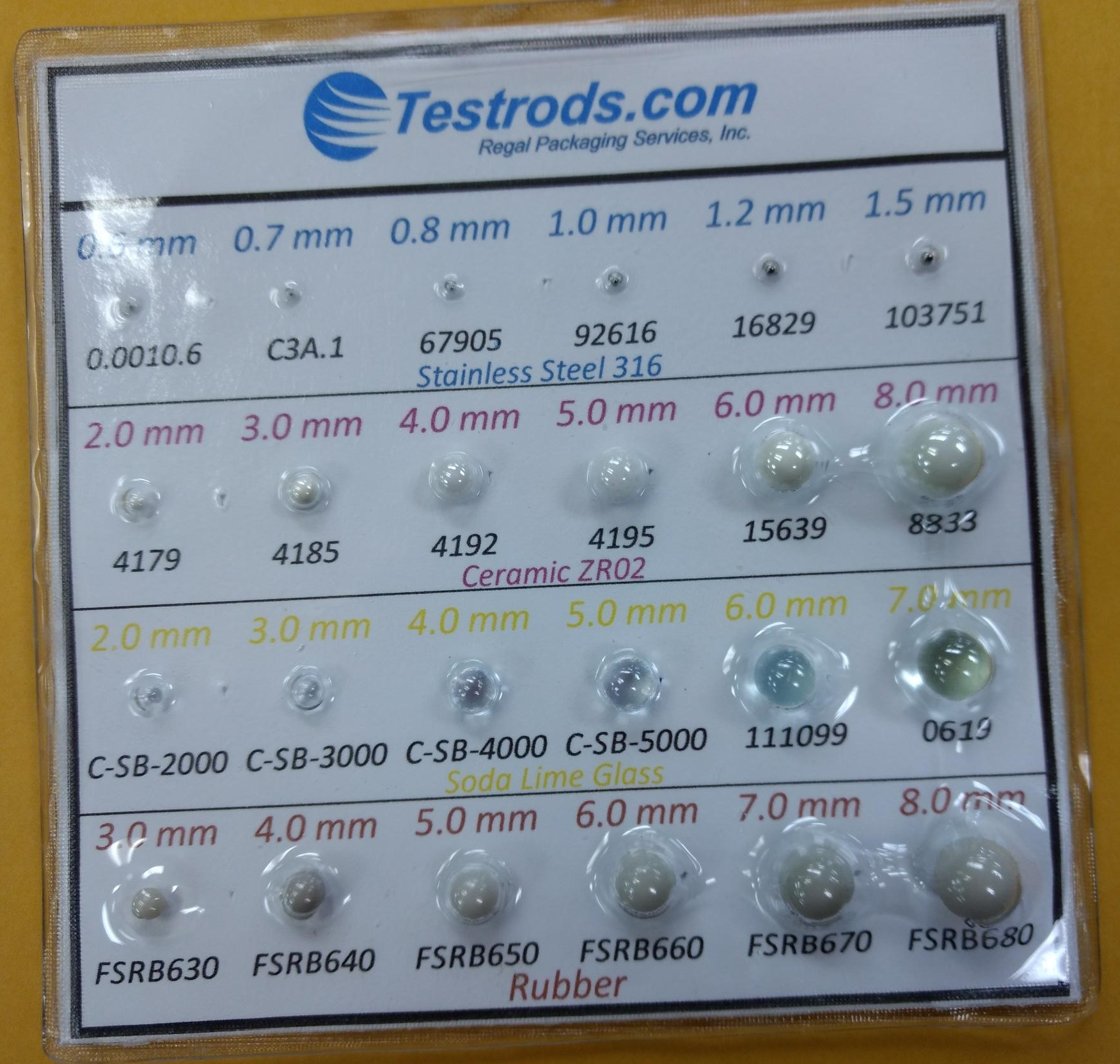

Diameter of the Test Sphere

The diameter of a test sphere is most often used to express a metal detector’s operating sensitivity. For instance, a detector that can locate a 0.6mm ferrous ball is more sensitive than one that can only detect a 1.0mm ball. The lower the number the better, assuming no false positives. It is not true, however, that a detector that can “see” a 0.6mm contaminant is merely twice as sensitive as one that can only see 1.2mm. That’s because detection is based, in part, on surface area. A 0.6mm ball has a surface area of just 1.131mm². The surface area of a 1.2mm ball is 4.524mm², four times as large. By that measure, the better detector is 300% more sensitive.
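The surface area comparison can be checked with a few lines of Python. This is only a sketch of the arithmetic, using the standard sphere formula (area = π × diameter²); the function name is made up for illustration.

```python
import math

def sphere_surface_area(diameter_mm: float) -> float:
    """Surface area of a sphere in mm^2, given its diameter in mm (A = pi * d^2)."""
    return math.pi * diameter_mm ** 2

small = sphere_surface_area(0.6)   # the finer test sphere
large = sphere_surface_area(1.2)   # the coarser test sphere
ratio = large / small              # how many times larger the coarse sphere's area is

print(f"0.6 mm sphere: {small:.3f} mm^2")
print(f"1.2 mm sphere: {large:.3f} mm^2")
print(f"area ratio: {ratio:.1f}x")
```

Doubling the diameter quadruples the surface area, which is why halving the detectable sphere size is a much bigger improvement than the diameters alone suggest.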

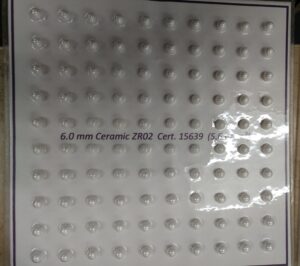

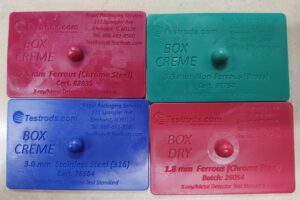

To know the true sensitivity, you’ll need a certified, reliable test piece (see testrods.com). The test piece must be detectable when traveling through the center of the detector’s aperture, both horizontally and vertically. This is the most difficult location for metal to be discovered, because it is where the magnetic field between the receiving coils is least sensitive. If the test piece is closer to the wall of the aperture, it will be easier to detect.

Keep in mind, however, that there can be considerable variance between the diameter of the metal sphere in the test piece and the length of an irregular piece of metal or wire in your product.

HACCP Plans

Any HACCP plan or audit may identify a hazard from any of the metals mentioned above. The sensitivity of the system, however, can vary depending on the metal type. Most often, quality assurance staff speak in terms of a separate sensitivity for each metal. For example, you may hear that the sensitivity is 1.0 Ferrous, 1.5 Non-Ferrous and 2.0 Stainless Steel 316. You might even just hear 1.0, 1.5 and 2.0. Ferrous material is typically the easiest to detect because of its magnetic properties. Non-Ferrous (Brass) is harder to find, and Stainless Steel 316 (the industry standard) is even more difficult. It’s important to test with SS 316 not only because it’s the chosen industry standard, but also because it’s the most difficult to detect. If you can find 316, you’ll detect other types, but not the other way around. Still, as with many standards, there are exceptions to this.
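As a rough illustration, per-metal sensitivities like these can be compared against what a detector actually achieves. The sketch below assumes the quoted figures are test sphere diameters in millimeters. The dictionary keys, the function name and the sample test results are all hypothetical and not taken from any real HACCP plan.

```python
import math

# Example limits from the figures quoted above. Assumption: these are
# test sphere diameters in mm. The key names are hypothetical.
HACCP_LIMITS_MM = {"ferrous": 1.0, "non_ferrous": 1.5, "stainless_316": 2.0}

def check_sensitivity(achieved_mm, limits_mm=HACCP_LIMITS_MM):
    """Return {metal: bool}. True means the smallest sphere the detector
    found for that metal is at or below the plan's limit. A metal with no
    recorded result is treated as a failure."""
    return {
        metal: achieved_mm.get(metal, math.inf) <= limit
        for metal, limit in limits_mm.items()
    }

# Hypothetical test results: smallest sphere detected per metal, in mm.
results = check_sensitivity(
    {"ferrous": 0.8, "non_ferrous": 1.5, "stainless_316": 2.5}
)
for metal, ok in results.items():
    print(f"{metal}: {'pass' if ok else 'fail'}")
```

In this made-up case the detector meets the ferrous and non-ferrous limits but not the stainless steel 316 limit, which is typically the hardest of the three to satisfy.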

We’ve already seen how the metal classification (Fe, NFe, SS 316, Alum) can determine detectability. But sensitivity is also determined, in part, by the orientation of the metal as it passes through the aperture. If the object’s cross-sectional area is smaller than the detector’s sensitivity allows it to find, the object will pass through without detection. This is one reason why it’s so critical to get the most out of your metal detector and write your HACCP plan accordingly.

The Greatest Sensitivity

To achieve the greatest sensitivity, use the smallest aperture possible. This will be determined, of course, by the type of product and its packaging. You’ll need to take into consideration the speed of the belt in a conveyor system, and other factors apply to gravity-fed and overhead configurations. You’ll also need to make room for the test piece. Ideally, the test piece will be inserted into the middle of the product, but this is not always possible.

The material used for packaging a product will sometimes reduce sensitivity if the material is conductive. This can happen, for instance, when recycled cardboard is used, because it often contains bits of metal. To adjust for that, you might want to decrease sensitivity, but that comes at the expense of the product, in which you may then miss metal. The location assigned for testing should be chosen carefully. It may be just prior to packing; in other cases, such as when metallized film is required, a solution may be available to overcome the issues the material creates.

Environment

Factory surroundings can also negatively impact the metal detector’s operating sensitivity. Built-in noise and vibration reduction will minimize the potential interference affecting the metal detector.

The conductivity of the product can cause it to behave the same way as metal when passing through the detector’s aperture. Products with high moisture or salt content, like meat and poultry, can detract from the detector’s sensitivity. This is often referred to as Product Effect. Large products, like large blocks of cheese (which are also conductive), can make it difficult to reach the desired levels of sensitivity. When a metal detector is set up, the operator should “calibrate” the metal detector to the product. In other words, the detector is set so that when good product is run through, no signal is created in the detector. “Calibration” is often confused with “Verification.” Technically, a metal detector has no calibratable parts. It is designed so that you can validate that it achieves the required sensitivity for the metal(s) you pass through it.